For the first time in about three years, I’ve had two weeks off work. I’ve spent a lot of time just relaxing and taking a break from things, but I’ve also been able to get back to doing some graphics work. Ever since Vivendi bought Activision, the project that I was leading has been “put on hold”, so I’ve been back on the game team. It’s not as fun for me, that’s for sure, but luckily, I have my code at home to play with, so all is not lost! With the holidays, I’ve found some motivation to get back to it.

What have I been doing? Well, as I was approaching the break, I read through the course notes from the Practical Global Illumination with Irradiance Caching course at Siggraph last year. I thought the course itself was really good, and very clearly presented. After blitzing through the notes again, I thought I’d have a go at writing a ray tracer. It seemed simple enough at the time, but like most things, the devil is in the details.

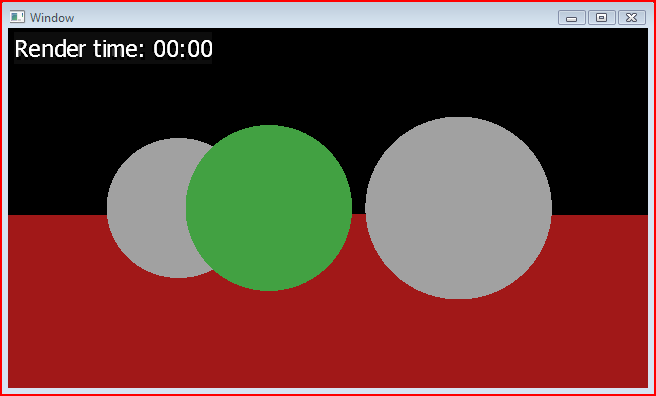

The first thing I did was to set up a really simple single-threaded ray tracer that just displayed the color of the surface it hit. This was fairly quick to get up and running once I had written a few supporting classes for the cameras and shapes. It’s not very glamorous, but it’s a start:
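As a rough illustration of this first step (a sketch, not the actual code behind these renders), the core of a solid-color tracer is a ray/shape intersection test plus a loop that keeps the nearest hit. The names `Sphere`, `intersect` and `trace` are made up for the example, and it assumes spheres only and unit-length ray directions.

```python
import math
from dataclasses import dataclass


@dataclass
class Sphere:
    center: tuple  # (x, y, z)
    radius: float
    color: tuple   # (r, g, b), returned as-is when the sphere is hit


def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def intersect(origin, direction, sphere):
    """Return the nearest positive hit distance, or None on a miss.

    Assumes `direction` is unit length, so the quadratic's leading
    coefficient is 1.
    """
    oc = sub(origin, sphere.center)
    b = dot(oc, direction)
    c = dot(oc, oc) - sphere.radius ** 2
    disc = b * b - c
    if disc < 0.0:
        return None
    root = math.sqrt(disc)
    for t in (-b - root, -b + root):
        if t > 1e-6:  # ignore hits behind or exactly at the origin
            return t
    return None


def trace(origin, direction, spheres, background=(0.0, 0.0, 0.0)):
    """Solid-color 'integrator': return the color of the nearest surface."""
    nearest_t, color = math.inf, background
    for sphere in spheres:
        t = intersect(origin, direction, sphere)
        if t is not None and t < nearest_t:
            nearest_t, color = t, sphere.color
    return color
```

Calling `trace` once per pixel, with a ray generated by the camera, gives the flat-colored image described above.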

Next, I added point lights and directional lights, and wrote a new integrator to calculate direct diffuse lighting. Once you have a function to trace rays around the scene, it’s really easy to add hard-edged shadows. It looks a lot better than the solid color integrator I first used, but it’s still not very impressive.

Here’s the scene with a single directional light and hard-edged shadows:

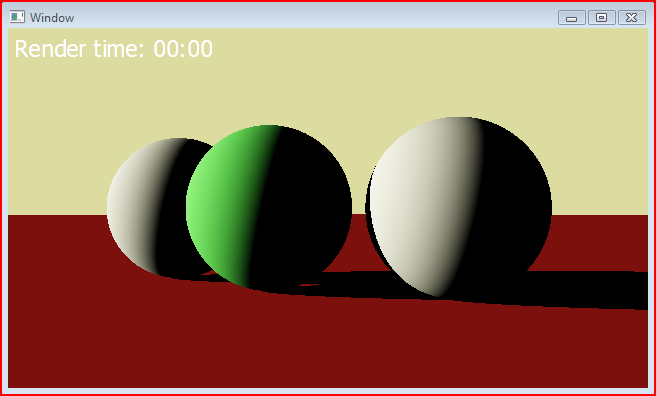
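A minimal sketch of direct diffuse lighting with a hard-edged shadow from a directional light is shown below. It is an illustration under assumptions rather than the integrator used for the render: the scene is a list of `(center, radius)` spheres, the shading is plain `albedo * light * N·L` with no 1/π factor, and the helper names (`hit_sphere`, `occluded`, `direct_diffuse`) are hypothetical.

```python
import math


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def add_scaled(p, d, t):
    return (p[0] + d[0] * t, p[1] + d[1] * t, p[2] + d[2] * t)


def normalize(v):
    length = math.sqrt(dot(v, v))
    return (v[0] / length, v[1] / length, v[2] / length)


def hit_sphere(origin, direction, center, radius):
    """Nearest positive hit distance along a unit-length ray, or None."""
    oc = sub(origin, center)
    b = dot(oc, direction)
    disc = b * b - (dot(oc, oc) - radius * radius)
    if disc < 0.0:
        return None
    root = math.sqrt(disc)
    for t in (-b - root, -b + root):
        if t > 1e-6:
            return t
    return None


def occluded(point, to_light, spheres):
    """Shadow ray: True if anything lies between the point and the light."""
    return any(hit_sphere(point, to_light, c, r) is not None for c, r in spheres)


def direct_diffuse(point, normal, albedo, light_dir, light_color, spheres):
    """Lambertian term for one directional light with a hard shadow.

    `light_dir` is the direction the light travels, so the vector
    towards the light is its negation.
    """
    to_light = normalize((-light_dir[0], -light_dir[1], -light_dir[2]))
    n_dot_l = dot(normal, to_light)
    if n_dot_l <= 0.0:
        return (0.0, 0.0, 0.0)  # surface faces away from the light
    # Offset the origin along the normal to avoid self-intersection.
    shadow_origin = add_scaled(point, normal, 1e-4)
    if occluded(shadow_origin, to_light, spheres):
        return (0.0, 0.0, 0.0)  # hard-edged shadow: fully lit or fully dark
    return tuple(a * l * n_dot_l for a, l in zip(albedo, light_color))
```

The shadow is hard-edged because the light is either visible or not from each point; there is no partial visibility to soften the boundary.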

I wanted to flex the ray tracer a little bit, so an easy next step was to add an ambient occlusion integrator. Initially, I just used a function to generate random uniform rays on the hemisphere around the hit normal, and used the ratio of misses to hits as the occlusion value. I found that this was really pretty noisy, so I tried using the length of the ray hits to weight the occlusion values. This definitely improved things, but it’s still pretty noisy. The obvious way to reduce the noise is to use more rays, but I’d like to find a cheaper way to do this if possible.
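The idea can be sketched roughly as follows. This is an illustration with assumptions, not the integrator itself: it returns the unoccluded fraction (1 means fully open), it uses a linear falloff `1 - t / max_distance` as the distance weighting, and `max_distance`, `nearest_hit` and `sample_hemisphere` are names invented for the example.

```python
import math
import random


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def nearest_hit(origin, direction, spheres):
    """Nearest positive hit distance against (center, radius) spheres, or None."""
    best = None
    for center, radius in spheres:
        oc = tuple(o - c for o, c in zip(origin, center))
        b = dot(oc, direction)
        disc = b * b - (dot(oc, oc) - radius * radius)
        if disc < 0.0:
            continue
        root = math.sqrt(disc)
        for t in (-b - root, -b + root):
            if t > 1e-6 and (best is None or t < best):
                best = t
                break
    return best


def sample_hemisphere(normal, rng):
    """Uniform direction on the hemisphere around `normal`.

    Picks a uniform direction on the whole sphere, then flips it if it
    points below the surface.
    """
    z = rng.uniform(-1.0, 1.0)
    phi = rng.uniform(0.0, 2.0 * math.pi)
    r = math.sqrt(max(0.0, 1.0 - z * z))
    d = (r * math.cos(phi), r * math.sin(phi), z)
    if dot(d, normal) < 0.0:
        d = (-d[0], -d[1], -d[2])
    return d


def ambient_occlusion(point, normal, spheres, num_rays, max_distance, rng):
    """Return visibility in [0, 1]; nearby hits occlude more than distant ones."""
    origin = tuple(p + n * 1e-4 for p, n in zip(point, normal))
    occlusion = 0.0
    for _ in range(num_rays):
        t = nearest_hit(origin, sample_hemisphere(normal, rng), spheres)
        if t is not None and t < max_distance:
            occlusion += 1.0 - t / max_distance  # assumed linear falloff
    return 1.0 - occlusion / num_rays
```

Because each ray contributes a random amount, the result is noisy, and the error of this kind of estimate only shrinks with the square root of the ray count, which is why so many rays are needed.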

Here’s the scene rendered with the ambient occlusion integrator using 4096 rays per hit:

The first time I tried to render this scene using 4096 ambient occlusion rays per pixel, it took about thirteen minutes. I’ve never really used release builds at home and the settings weren’t great, so I tweaked some of the project settings, and compiled out the asserts. This got the time down to about ten minutes. I’m running these renders on my MacBook Pro, so I have a whole other core just sitting there doing nothing. Switching to a multithreaded renderer sped the renders up by roughly a factor of two.

Combining some of the concepts of the ambient occlusion integrator, and the direct diffuse integrator, I created a multi-bounce diffuse integrator. Like the direct diffuse integrator, it calculates the direct diffuse lighting at the hit point. Additionally though, it uses a Monte Carlo estimator to approximate the diffuse lighting integral over the hemisphere about the normal of the hit point. It can handle any number of bounces of indirect light, but the render time increases exponentially with each bounce added. Like the ambient occlusion integrator, it requires a large number of sample rays to get an acceptable level of noise.
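To make the Monte Carlo step concrete, here is a small sketch of an estimator for one bounce of indirect diffuse light. It assumes uniform hemisphere sampling (pdf of 1/(2π)) and a Lambertian BRDF of albedo/π, and it takes a hypothetical `incoming_radiance` callback standing in for "trace this ray and shade what it hits". None of these names come from the actual ray tracer.

```python
import math
import random


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def sample_hemisphere(normal, rng):
    """Uniform direction on the hemisphere around `normal`."""
    z = rng.uniform(-1.0, 1.0)
    phi = rng.uniform(0.0, 2.0 * math.pi)
    r = math.sqrt(max(0.0, 1.0 - z * z))
    d = (r * math.cos(phi), r * math.sin(phi), z)
    if dot(d, normal) < 0.0:
        d = (-d[0], -d[1], -d[2])
    return d


def estimate_indirect(normal, albedo, incoming_radiance, num_samples, rng):
    """Estimate (albedo / pi) * integral of L_i(w) * cos(theta) dw.

    With uniform sampling each sample is divided by the pdf 1 / (2 * pi),
    so the pi terms cancel and the estimator reduces to
    (2 * albedo / N) * sum(L_i * cos(theta_i)).
    """
    total = 0.0
    for _ in range(num_samples):
        direction = sample_hemisphere(normal, rng)
        cos_theta = dot(direction, normal)
        # In a real tracer this would trace a ray and, for further
        # bounces, recurse, spawning num_samples rays at every hit.
        total += incoming_radiance(direction) * cos_theta
    return 2.0 * albedo * total / num_samples
```

If every hit spawns N further rays, k bounces cost on the order of N^k rays per pixel, which is where the exponential growth in render time comes from.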

Here’s the scene again with one bounce of indirect light, and 4096 rays per hit:

When a ray misses the scene, it looks up an environment color, which you can see in the background. Most of the indirect rays miss the scene, so this background color has a huge effect on the look of the scene. I should mention as well that I’m using a really simple tone mapping operator to map the HDR ray tracer values down to the 8 bit per channel texture.
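The post doesn’t say which tone mapping operator is used, so the sketch below is purely an assumption: it applies the simple `x / (1 + x)` curve (often called the Reinhard operator) per channel and quantizes to 8 bits, with no gamma correction. The function names are made up for the example.

```python
def tone_map_channel(value):
    """Map an HDR value in [0, inf) to an 8-bit integer in [0, 255].

    Assumed operator: x / (1 + x), which compresses bright values
    smoothly instead of clipping them.
    """
    value = max(0.0, value)
    mapped = value / (1.0 + value)
    return int(mapped * 255.0 + 0.5)


def tone_map(color):
    """Tone map an (r, g, b) HDR color to 8 bits per channel."""
    return tuple(tone_map_channel(c) for c in color)
```

For example, `tone_map((0.0, 1.0, 1e9))` maps black to 0, a value of 1.0 to mid-range, and a very bright value to 255 rather than overflowing.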

While working on the ray tracer, I would often be playing around with the objects and lights in the scene. I quickly found out that it’s really not very fun to wait for the ray trace to complete before getting some feedback. I can reduce the number of indirect rays to make things quicker, but even at relatively low values, it can take a while to render the final scene.

I had already split the rendering of the scene into 32 by 32 blocks when I switched to a multi-threaded ray tracer, so it was a really simple extension to change the resolution in each of these blocks on the fly. I basically start things off by rendering with each ray covering 32 by 32 pixels, then when that completes, I immediately kick off another render at 16 by 16, and so on. Each successive render takes four times as long as the previous render, so if the 1 by 1 render takes about a minute, then you get the 8 by 8 render in about a second!
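The arithmetic behind this is easy to check with a short sketch. The function names and the 640 by 480 image size are hypothetical; the only things taken from the description above are the 32 by 32 starting size and the halving of the cell size on each pass.

```python
def refinement_steps(block_size=32):
    """Cell sizes for successive passes: 32, 16, 8, 4, 2, 1."""
    steps = []
    step = block_size
    while step >= 1:
        steps.append(step)
        step //= 2
    return steps


def rays_for_pass(width, height, step):
    """One primary ray per step-by-step cell (partial edge cells count too)."""
    cells_x = -(-width // step)   # ceiling division
    cells_y = -(-height // step)
    return cells_x * cells_y


def render_pass(width, height, step, shade):
    """Trace one ray per cell and splat its color over the whole cell."""
    image = [[None] * width for _ in range(height)]
    for y0 in range(0, height, step):
        for x0 in range(0, width, step):
            color = shade(x0, y0)  # one ray stands in for the whole cell
            for y in range(y0, min(y0 + step, height)):
                for x in range(x0, min(x0 + step, width)):
                    image[y][x] = color
    return image


if __name__ == "__main__":
    width, height = 640, 480  # hypothetical image size
    full = rays_for_pass(width, height, 1)
    for step in refinement_steps():
        rays = rays_for_pass(width, height, step)
        print(f"{step:2d} by {step:<2d}: {rays:6d} rays, {rays / full:.4f} of the final pass")
```

The 8 by 8 pass traces 1/64 of the rays of the 1 by 1 pass, which is why a one-minute final pass gives an 8 by 8 preview in about a second. All of the coarser passes together add up to less than a third of the final pass, since 1/4 + 1/16 + 1/64 + … converges to 1/3.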

Here’s the scene rendered using 512 indirect samples, paused at the 4 by 4 resolution:

And here’s the scene at the conclusion of rendering (note that the time is cumulative of all the previous renders):

It’s pretty clear at the 4 by 4 resolution how the render is going to look, and it only took four seconds to get there, whereas the final scene took nearly a minute. The 1 by 1 resolution actually took only 40 seconds of that minute to render, but still, having the feedback within a tenth of the final render time seems worth the extra wait at the end.

That’s basically as far as I got over the past couple of weeks. Like many things I do, there seems to be more to do now than at the beginning. One of the things I’d really like to do is to be able to render out the lighting to radiosity normal maps. This would allow me to use the static precomputed lighting in my DirectX 10 engine. I could also output spherical harmonic coefficients for light probes, which would allow me to render dynamic objects using the precomputed lighting.

Well, work starts back up in a couple of days, so the amount of time I can spend on this is going to be limited again, but I’ll post any significant updates. I have another article about the lighting calculation on the way, but it’s competing for my time!

Very interesting post. We have a class project where you have to implement a ray tracer, and like you, I too got really annoyed at having to wait a few minutes to get any results.

The book we used for the course (and something I highly recommend if you are looking into this) is Realistic Ray Tracing, Second Edition (Hardcover) by R. Keith Morley and Peter Shirley: http://www.amazon.com/gp/product/1568811985

Anyway, they talk about using bounding volume hierarchies to go through the scene. We ended up doing some polygon meshes for the ray tracing, and I had a 3k poly model that would take about 20 minutes to render, even though I had a dual core and was multithreading it.

Finally I bit the bullet and implemented a loose octree structure (I didn’t really dig the whole binary/BVH approach; I suppose octrees just make more sense for 3D space to me).

Anyway, that cut the render down to ~3 seconds or so, as opposed to brute forcing through all the objects.

You only have 3 spheres and a plane, but testing all 4 surfaces for every ray adds up, especially if you could just do one ray shoot and determine if you actually hit anything in one if statement! (However, the cost per actual hit goes up, but yea…)

Thanks for the book recommendation. I haven’t really looked into performance improvements too much yet, but when I get into tracing against triangle meshes then I’m sure that will jump up in priority. It’s interesting to hear about the performance increase you got by using an octree though.

Good luck with your project!