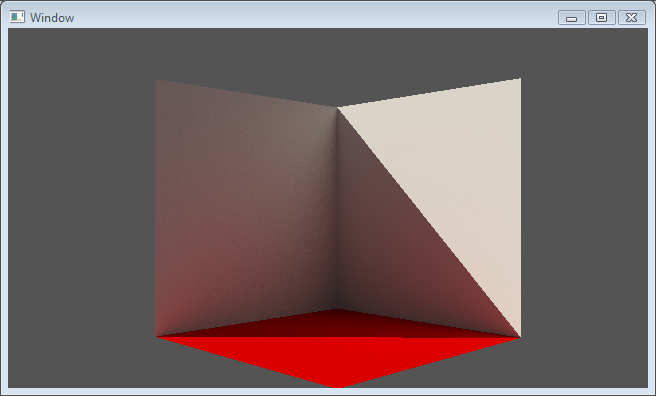

Well, you could point out a number of things to answer that question. There’s some pretty obvious aliasing, a random pixel on the ground which should be in shadow but isn’t, it’s noisy, boring etc. But that’s not my point. The point is: It’s too dark!

I know it’s too dark because I know how I rendered it, and I rendered it wrong. It still kind of looks acceptable (well to me at least) though. I’m not sure that I would say that it’s implausibly dark if I didn’t know it.

How many bounces are enough?

I rendered this image using a Monte Carlo estimator with two bounces of indirect light. Each bounce estimated the irradiance at the intersection point using 256 rays in a cosine-weighted, stratified sampling pattern. It’s the two-bounce part that makes it wrong. Any light that bounced more than twice before heading toward the camera is totally ignored. Since this approach doesn’t converge on the correct solution to the rendering equation no matter how many rays are cast, it’s classified as ‘biased’.
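For anyone who hasn’t seen a cosine-weighted stratified estimator before, here is a minimal Python sketch of the idea. It is not my renderer’s code: the function names, the 16 x 16 jittered grid (which gives 256 rays) and the `incoming_radiance` callback are all assumptions made for illustration. The directions are generated around a +z normal, so a real renderer would still have to rotate them into the surface frame.

```python
import math
import random


def cosine_weighted_stratified(n_side, rng):
    """Return n_side * n_side unit directions about +z, cosine-weighted."""
    directions = []
    for i in range(n_side):
        for j in range(n_side):
            # Jitter one sample inside each cell of an n_side x n_side grid.
            u1 = (i + rng.random()) / n_side
            u2 = (j + rng.random()) / n_side
            r = math.sqrt(u1)              # radius on the unit disk
            phi = 2.0 * math.pi * u2
            # Project the disk sample up onto the hemisphere.
            z = math.sqrt(1.0 - u1)
            directions.append((r * math.cos(phi), r * math.sin(phi), z))
    return directions


def estimate_irradiance(incoming_radiance, n_side=16, seed=1):
    """Estimate the integral of L_i * cos(theta) over the hemisphere."""
    rng = random.Random(seed)
    dirs = cosine_weighted_stratified(n_side, rng)
    # The pdf is cos(theta) / pi, so each sample contributes L_i * pi.
    return math.pi * sum(incoming_radiance(d) for d in dirs) / len(dirs)
```

Because the cosine term cancels against the pdf, a constant incoming radiance of 1 gives an irradiance of exactly pi, which is a handy first sanity check.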

How much does light that bounced more than twice really contribute to a scene? Of course that depends quite a bit on the materials in the scene, but in this case there’s a noticeable difference. I rendered the exact same scene using the Monte Carlo estimator for the first bounce, but for the subsequent bounces I used a path tracer. By using Russian Roulette (a topic unto itself) to terminate the path, you can get an unbiased estimate of the irradiance.

Ok, great. It’s brighter, but is it actually correct? I was wondering about this when I came across an idea in Physically Based Rendering: compare the results of my integrators with something that has an analytical solution to the rendering equation.

I now present an analytical solution to the Rendering Equation!

(… in a very simple case)

Solving the rendering equation analytically is just impossible for most scenes, which is why we have to rely on numerical methods like Monte Carlo estimation. However, the book suggests a very simple scene for which it can be solved. The suggested setup is that of light bouncing around the inside of a sphere. The sphere emits light internally, and reflects it diffusely to other points on the inside of the sphere. Since the sphere is rotationally invariant and the reflections are diffuse, every point on the sphere reflects and emits the same radiance in all directions.

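So how do you solve the rendering equation for this situation? It’s fairly easy. Recall the rendering equation:

$$L_o(x, \omega_o) = L_e(x, \omega_o) + \int_{\Omega} f_r(x, \omega_i, \omega_o)\, L_i(x, \omega_i) \cos\theta_i \, d\omega_i$$

In this setup, we are using a diffuse BRDF with reflectance d. It’s also normalized to maintain energy conservation:

$$f_r = \frac{d}{\pi}$$

Also, as mentioned previously, the outgoing radiance in all directions is the same as the incoming radiance, so this reduces the rendering equation to:

$$L = L_e + \frac{d}{\pi} \int_{\Omega} L \cos\theta \, d\omega$$

Solving this equation for L is now pretty easy. First we have to convert from an integral over solid angle to a double integral over the angles theta and phi. The important thing to remember when doing this is to introduce the new sine term:

$$L = L_e + \frac{d}{\pi} L \int_0^{2\pi} \int_0^{\pi/2} \cos\theta \sin\theta \, d\theta \, d\phi$$

There’s a double angle trigonometric identity that makes this easier:

$$\sin 2\theta = 2 \sin\theta \cos\theta$$

So we just need to integrate the following:

$$L = L_e + \frac{d}{2\pi} L \int_0^{2\pi} \int_0^{\pi/2} \sin 2\theta \, d\theta \, d\phi$$

Integrate over theta:

$$\int_0^{\pi/2} \sin 2\theta \, d\theta = \left[ -\tfrac{1}{2} \cos 2\theta \right]_0^{\pi/2} = 1$$

This integral over theta is just 1, so now integrate over phi:

$$L = L_e + \frac{d}{2\pi} L \int_0^{2\pi} d\phi$$

This integral is of course just 2π. So we’re left with a very simple equation:

$$L = L_e + d L$$

Solving for L gives us the final expected radiance at every point in the sphere:

$$L = \frac{L_e}{1 - d}$$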

This intuitively makes sense. As d grows, so does L. When d is 1, all hell breaks loose (nothing is ever absorbed, so L grows without bound), and when d is 0 all we are left with is the emitted light. Obviously d can never be greater than 1 or energy conservation would be broken.

The test application

Alright, so all I need to do now is make a test application that fires a bunch of rays around the inside of a sphere and compare the results to the analytical solution. Well… So I thought. Due to the curvature of the inside of the sphere, I found that a good number of rays I fired near the horizon were escaping the sphere.

Currently I apply an epsilon to the minimum ray intersection distance to try to prevent self-intersections, and this is what causes the problem. For now (and just for these tests), I’m instead pushing the ray starting point away from the intersection by a small amount along the intersection normal. I made the sphere really big too. I’d welcome any better ideas for alleviating this problem.
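The normal-offset workaround is only a couple of lines. Here is a rough Python sketch of it, assuming points and normals are plain 3-tuples and that the normal is the inward-facing one (toward the sphere’s centre). The function name, the epsilon of 1e-3 and the radius of 1000 are placeholders picked for the example, not the values I actually used.

```python
def offset_ray_origin(hit_point, normal, epsilon=1e-3):
    """Move the ray origin a small distance along the surface normal."""
    return tuple(p + epsilon * n for p, n in zip(hit_point, normal))


# Inside a sphere centred at the origin, the shading normal points inward.
radius = 1000.0
hit_point = (radius, 0.0, 0.0)
inward_normal = (-1.0, 0.0, 0.0)

# The new origin sits just inside the surface instead of exactly on it.
origin = offset_ray_origin(hit_point, inward_normal)
```

The bounce ray then starts strictly inside the sphere, so there is no need for a minimum-distance cutoff that could reject a legitimate near-horizon hit.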

For the tests below, I set the emitted light value to 1, and the diffuse reflectance, d, to 0.5, meaning that the outgoing radiance, L, should be 2.

Multi-bounce Monte Carlo results

You can work out from the radiance equation how different numbers of bounces of light will affect the final solution. In this case, I just ran my Monte Carlo integrator from 0 to 16 bounces and produced the following graph showing the absolute error as a percentage of the expected result.

You can see that the error starts off really high, but drops off pretty quickly as more bounces are added. Each subsequent bounce has less and less effect on the error reduction, as expected.
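If you do want to work it out by hand, the truncated solution is just a partial geometric series: keeping n bounces gives L_n = L_e (1 + d + ... + d^n), so the relative error against L = L_e / (1 - d) is d^(n+1). The Python sketch below tabulates that theoretical error. It assumes that “0 bounces” means emitted light only, and it prints predicted values rather than the measurements from my graph. The function names are made up for the example.

```python
def truncated_radiance(emitted, d, bounces):
    """Radiance inside the sphere when only `bounces` reflections are kept."""
    return emitted * sum(d ** k for k in range(bounces + 1))


def percent_error(emitted, d, bounces):
    """Absolute error of the truncated result, as a percentage of the exact one."""
    exact = emitted / (1.0 - d)
    return 100.0 * abs(exact - truncated_radiance(emitted, d, bounces)) / exact


for n in range(0, 17):
    print(f"{n:2d} bounces: {percent_error(1.0, 0.5, n):8.4f}% error")
```

With an emitted value of 1 and d = 0.5 the predicted error halves with every extra bounce, dropping from 6.25% at three bounces to 3.125% at four.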

What you have to remember, though, is that the number of rays that have to be cast grows exponentially with the number of bounces. In real-world situations we need to cast a lot of rays over the hemisphere in order to get a correct solution. To get under 5% error you’d need four bounces with this method. Even a very modest number of rays per estimate quickly becomes unruly: 256 rays with 4 bounces = over 4 billion rays needed! Per pixel! Without anti-aliasing!
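The arithmetic behind that number is simple enough to check. This small Python sketch assumes every hit spawns a full estimate with the same number of rays; the function names are hypothetical.

```python
def leaf_rays(rays_per_estimate, bounces):
    """Rays cast at the deepest bounce when every hit spawns a full estimate."""
    return rays_per_estimate ** bounces


def total_rays(rays_per_estimate, bounces):
    """Rays cast across all bounce levels, not just the deepest one."""
    return sum(rays_per_estimate ** k for k in range(1, bounces + 1))


print(leaf_rays(256, 4))   # 4294967296
print(total_rays(256, 4))  # 4311810304
```

Almost all of the cost sits in the final bounce, which is why counting only the leaf rays is a fair approximation.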

Path Tracer results

Ok, so how about the path tracer? In theory the path tracer should average out to zero error, but can have potentially very high variance. Here’s the average error over 1000 runs with different survival probabilities:

Well, it’s not zero everywhere, but it’s pretty close in most cases. I think that the results obtained from this kind of method probably depend quite a bit on the quality of the random number generator used. I’m just using the stdlib version, so I’ll try switching it up at some point to see how it affects things. I haven’t shown it here, but the variance at low survival probabilities is incredibly high. This surely accounts for how far off the results are under about 10% survival.

The good thing about the path tracer is that it doesn’t require exorbitant numbers of rays. Yes, the variance can be high, but it can be reduced by tracing more paths. The net result is that it produces low error images much quicker than using standard Monte Carlo integration.
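To make the Russian Roulette idea concrete for the sphere test, here is a stripped-down Python sketch. It leans on the fact that every point inside the sphere is equivalent, so it skips the geometry entirely and only tracks the path throughput. That shortcut is an assumption of this sketch, not how my actual test application works, and the function names and default seed are invented for the example.

```python
import random


def trace_path(emitted, d, survival, rng):
    """One Russian Roulette path inside the uniformly emitting diffuse sphere."""
    if not 0.0 < survival < 1.0:
        raise ValueError("survival probability must be strictly between 0 and 1")
    radiance = 0.0
    throughput = 1.0
    while True:
        radiance += throughput * emitted  # every surface point emits
        if rng.random() >= survival:      # the path is terminated here
            return radiance
        # Cosine-weighted sampling cancels the cos/pi terms and leaves d;
        # dividing by the survival probability keeps the estimate unbiased.
        throughput *= d / survival


def estimate_radiance(emitted, d, survival, n_paths, seed=0):
    """Average many independent paths to estimate the radiance."""
    rng = random.Random(seed)
    total = sum(trace_path(emitted, d, survival, rng) for _ in range(n_paths))
    return total / n_paths
```

With an emitted value of 1 and d = 0.5, the average should settle near the analytical answer of 2 for any survival probability, although low probabilities need far more paths before the noise dies down.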

The End

Well, the point of this post really was: How do you know your integrator is correct? Comparing it to something simple that can be calculated analytically is not the be-all and end-all, but it’s a good start. There are many other things that could still be wrong even if your integrator gets good results against this simple test, like how you’re calculating sample directions, your PDF etc.

For me, being able to compare my integrators to a known solution definitely helped me to make sure that my path tracer was getting correct results.
